Validate a Model

After you train a model, you can validate it in the Validation tab. This topic describes how to configure validation parameters, validate models, and view the validation results.

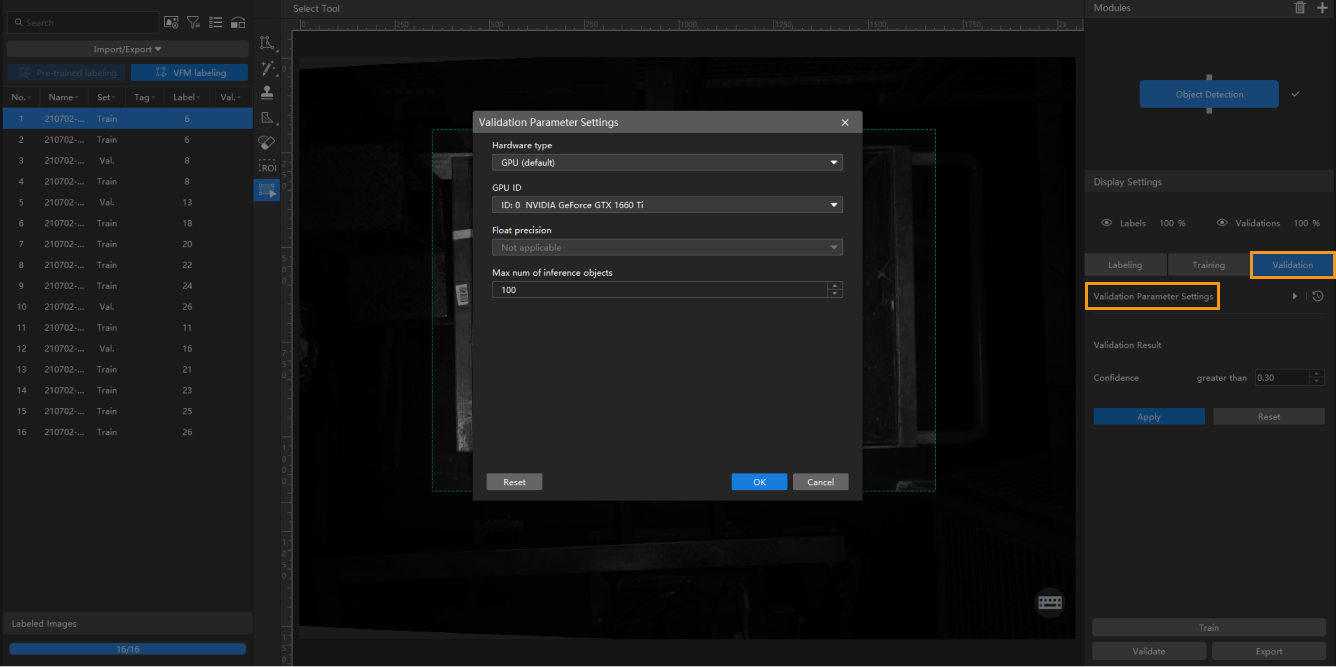

Configure Validation Parameters

Click ![]() to open Validation Parameter Settings.

You can configure the following parameters:

- Hardware type
  - CPU: Runs deep learning model inference on the CPU. Compared with GPU, this increases inference time and reduces recognition accuracy.
  - GPU (default): Runs model inference without hardware-specific optimization, so inference is not accelerated.
  - GPU (optimization): Runs model inference after optimizing the model for the hardware. The optimization only needs to be done once and is expected to take 1–20 minutes.
- GPU ID

  The graphics card of the device on which the model is deployed. If the device has multiple GPUs, you can specify the GPU on which the model is deployed.
- Float precision
  - FP32: higher model accuracy, lower inference speed.
  - FP16: lower model accuracy, higher inference speed.
- Max num of inference objects (only visible in the Instance Segmentation module and Object Detection module)

  The maximum number of objects inferred in a single round of inference.
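If you keep validation settings alongside your project notes or scripts, the parameters above can be written down as a small data structure. The following is a minimal sketch only: `ValidationParams`, `HardwareType`, and `FloatPrecision` are hypothetical names made up for illustration and are not part of the software's API. The default values for GPU ID and float precision are likewise assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class HardwareType(Enum):
    """Hypothetical mirror of the 'Hardware type' options."""
    CPU = "CPU"
    GPU_DEFAULT = "GPU (default)"
    GPU_OPTIMIZATION = "GPU (optimization)"


class FloatPrecision(Enum):
    """Hypothetical mirror of the 'Float precision' options."""
    FP32 = "FP32"  # higher accuracy, lower speed
    FP16 = "FP16"  # lower accuracy, higher speed


@dataclass(frozen=True)
class ValidationParams:
    """Illustrative record of the validation parameters (not a real API)."""
    hardware_type: HardwareType = HardwareType.GPU_DEFAULT
    gpu_id: int = 0  # assumed default: first GPU on the device
    float_precision: FloatPrecision = FloatPrecision.FP32  # assumed default
    # Only meaningful for Instance Segmentation and Object Detection.
    max_inference_objects: Optional[int] = None

    def __post_init__(self) -> None:
        # Reject values that cannot correspond to a valid setting.
        if self.gpu_id < 0:
            raise ValueError("gpu_id must be non-negative")
        if self.max_inference_objects is not None and self.max_inference_objects < 1:
            raise ValueError("max_inference_objects must be at least 1")


# Example: optimized GPU inference on the second GPU at half precision.
params = ValidationParams(
    hardware_type=HardwareType.GPU_OPTIMIZATION,
    gpu_id=1,
    float_precision=FloatPrecision.FP16,
    max_inference_objects=100,
)
print(params.hardware_type.value)  # prints "GPU (optimization)"
```

Using enumerations for the two option lists keeps the recorded values limited to the choices shown in Validation Parameter Settings.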